The use of artificial intelligence has created a world of copyright-related questions for creators and legal experts, and many of these questions, at least for now, have no clear answers.

“The interest on the legal side of AI has exploded,” said Indianapolis attorney David Wong, who practices intellectual property law as a partner at Barnes & Thornburg LLP. “Everybody’s paying attention, everybody’s aware, and now everybody has questions.”

Among them: When would AI-generated material that relies on previous human-created and copyrighted works actually violate a copyright? And at what point would new AI-generated work aided by humans qualify for a copyright?

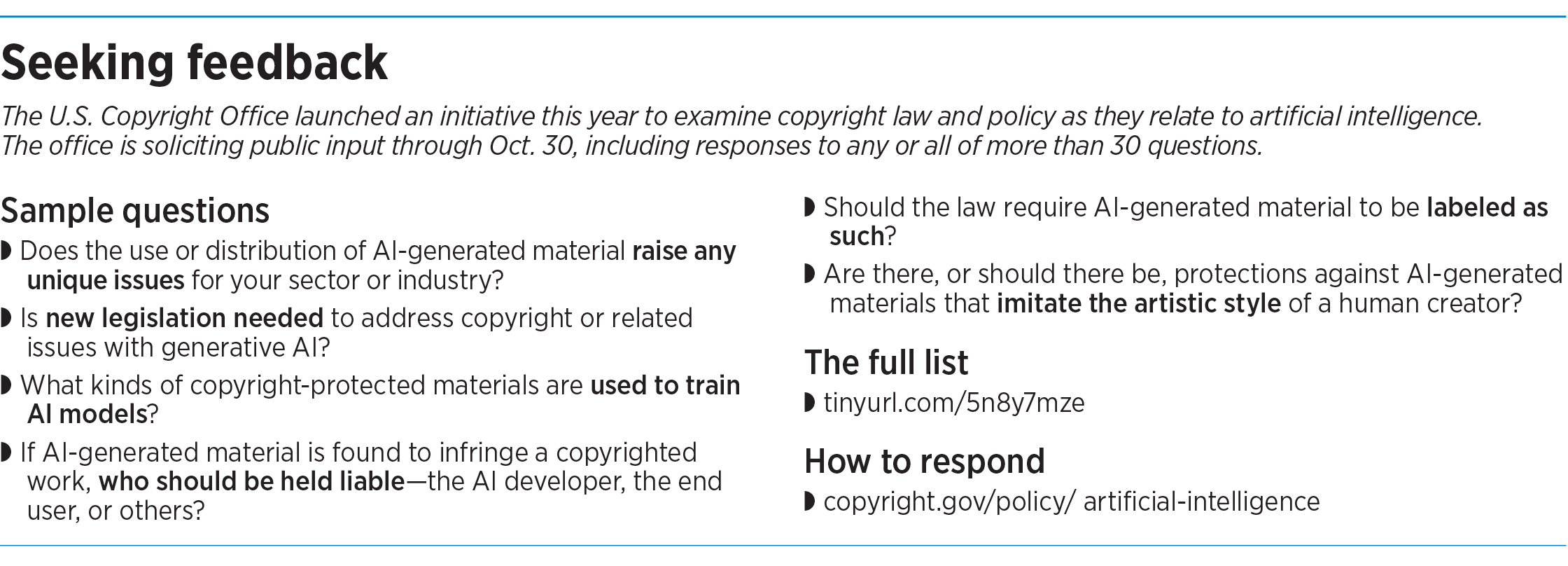

In an effort to gain some clarity, the U.S. Copyright Office is seeking public feedback on a wide range of questions about AI-related copyright issues. The comments period, which runs through Oct. 30, is part of a larger effort the Copyright Office launched in March.

“This initiative is in direct response to the recent striking advances in generative AI technologies and their rapidly growing use by individuals and businesses,” the Copyright Office said in announcing the initiative, adding that it had already begun receiving copyright applications for works that include AI-generated content.

As currently written, U.S. copyright law protects original works ranging from art and literature to sound recordings, computer software, movies and more. Works must be created by a human author, so it’s generally accepted that a work that was totally generated by AI cannot be copyrighted, local attorneys say.

But it’s not so clear how the law applies to other AI-related copyright questions.

For instance: If a person uses AI-generated material as a starting point and adds his or her own creative elements—how much human input would be required before the work qualifies for a copyright?

“That’s the $64,000 question,” said Paul Overhauser, a Greenfield attorney who specializes in intellectual property law and also has an undergraduate degree in computer science.

Another big question: Since AI models are trained on vast amounts of data, some of which is presumably copyright-protected, what copyright issues might arise from using AI-generated materials?

“I think for most of our clients, they’re wondering, ‘If I use generative AI to generate an image, if I’m going to use an image in marketing, am I allowed to use that image, or do I risk being sued or having an allegation of copyright infringement?’” said intellectual property attorney Joseph Balthazor, an associate in the Minneapolis office of Taft Stettinius & Hollister LLP. “And that’s a pretty important question, and right now it’s not settled.”

Early test cases

The attorneys said they’re not aware of any AI-related copyright litigation pending in Indiana courts. But elsewhere, several copyright lawsuits are currently making their way through the legal system.

Last November, two anonymous plaintiffs filed suit against GitHub Inc., Microsoft Corp. and OpenAI Inc. in federal court in California. The plaintiffs allege that the defendants improperly used open-source code to train GitHub’s Copilot system, an AI tool that can generate computer code.

In January, three visual artists in Oregon, Tennessee and California sued Stability AI, Midjourney Inc. and DeviantArt Inc. The lawsuit, filed in federal court in California, alleges that the defendants used the plaintiffs’ copyrighted images without permission to train their image-based generative AI tools.

In July, comedian Sarah Silverman and two other plaintiffs sued OpenAI Inc. in federal court in California, alleging that OpenAI infringed their copyrights by including their books in the company’s training data sets for its large language models, including GPT-4.

All three of these cases are pending.

In August, a federal court in Washington, D.C., affirmed the U.S. Copyright Office’s decision to deny a copyright application filed for a piece of AI-generated art. The plaintiff in that case, computer scientist Stephen Thaler, has indicated he plans to appeal, Reuters reported in August.

The proliferation of court cases convinced the Indy Arts Council’s director of public art, Julia Muney Moore, to add a question about artificial intelligence to the arts council’s Sidewalk Gallery program, which features local art on vacant downtown storefronts.

In this program, the arts council licenses the use of a photograph of a local artist’s work. The artist’s copyright on the original artwork is what gives him or her the ability to grant a license to the arts council, Moore said. But if the piece was totally generated by AI, and thus not eligible for a copyright, the arts council wouldn’t be able to license it, she said.

So for this round of Sidewalk Gallery applications, the arts council included a question asking whether the artist used AI in the piece’s creation, Moore said. A “yes” answer would prompt the arts council to seek information about how much of a role AI played, she said. (The application deadline was Oct. 15, and Moore said none of the applicants answered “yes” to the AI question.)

“I think AI is a wonderful tool, but it opens a lot of issues,” she said. “I think artists are still kind of wrestling with how to use this new tool and how to use it safely.”

Local creators

Hoosier creators are taking a variety of approaches to the technology.

Painter Caleb Harris Weintraub, an associate professor of painting at Indiana University’s Eskenazi School of Art, Architecture and Design in Bloomington, said he’s found generative AI to be a helpful tool for brainstorming, among other uses.

Weintraub and colleague David Ondrik, a lecturer in photography at the Eskenazi School, developed a studio art class, AI in the Studio, that was offered in the spring 2023 semester. Weintraub said he’s working to get approval to offer the class again for the fall 2024 semester.

He’s used generative AI tools to help him refine initial concepts, he said. The technology can offer artistic interpretations, giving him ideas for how he might develop a preliminary sketch, for instance. Or it can suggest different variations on a concept, allowing Weintraub to visualize various options without doing so much of the labor himself, speeding up the creative process.

In using AI, he said, “I feel that I can fail more without having serious repercussions. And so that gives me a lot of room to experiment.”

Weintraub said he also sees downsides to the technology, particularly in cases where the AI is creating the entire work.

“There are problems with it, because it’s scraping information from the Internet, which includes artwork that people have not approved being incorporated into the data sets. There’s a wide range of problems that I have with [generative AI]. And some of it is not a legal problem. It’s an ethical issue for me. And I think that there’s a gap there, because even if it’s legislated so that it approves or allows for a fair degree of AI use in the arts, that doesn’t mean that it’s optimal, doesn’t mean that it’s OK, that it’s right.”

Indianapolis author Maurice Broaddus said he’s paying attention to the issue as well.

“It’s definitely a topic I keep my eye on,” said Broaddus, a sci-fi and fantasy author whose works include the 2019 novel “Pimp My Airship,” which won an Indiana Authors Award; and the 2020 novella “Sorcerers,” for which AMC Networks has purchased the adaptation rights.

Broaddus said he doesn’t see generative AI as a threat to his craft, because it can only draw from existing information. “But I’m writing something new. And I’ve grown since the last things I wrote. It can imitate up to a point, so it’s in a spot of, it can imitate but it can’t innovate.”

Broaddus said he’s played around with the technology—and has even instructed generative AI to create works that mimic his style. He wasn’t impressed with the result, calling it “hot garbage.”

But Broaddus also concedes that the technology is quickly advancing, and he might have a different feeling about it as its capabilities evolve. “I could be having a different conversation with you three years from now.”
