Government agencies have started to study the impact and potential implications of emerging artificial intelligence, which means new regulations around AI could be on the horizon.

But local tech founders and funders have diverse and nuanced opinions on whether new laws governing AI are necessary or whether effective legislation of the technology is even possible.

So far, the Indiana Legislature has not taken any action on AI, and as of Wednesday, no bills had been filed for the 2024 legislative session. Jennifer Hallowell, president of the Indiana Technology and Innovation Association, a tech-industry lobbying group, said last week she was not aware of any legislators who plan to introduce AI legislation in the coming session, which begins in January.

A bipartisan study committee held an AI-focused meeting in late October, though it was mostly intended to educate lawmakers on the topic of AI, its uses and potential benefits and risks.

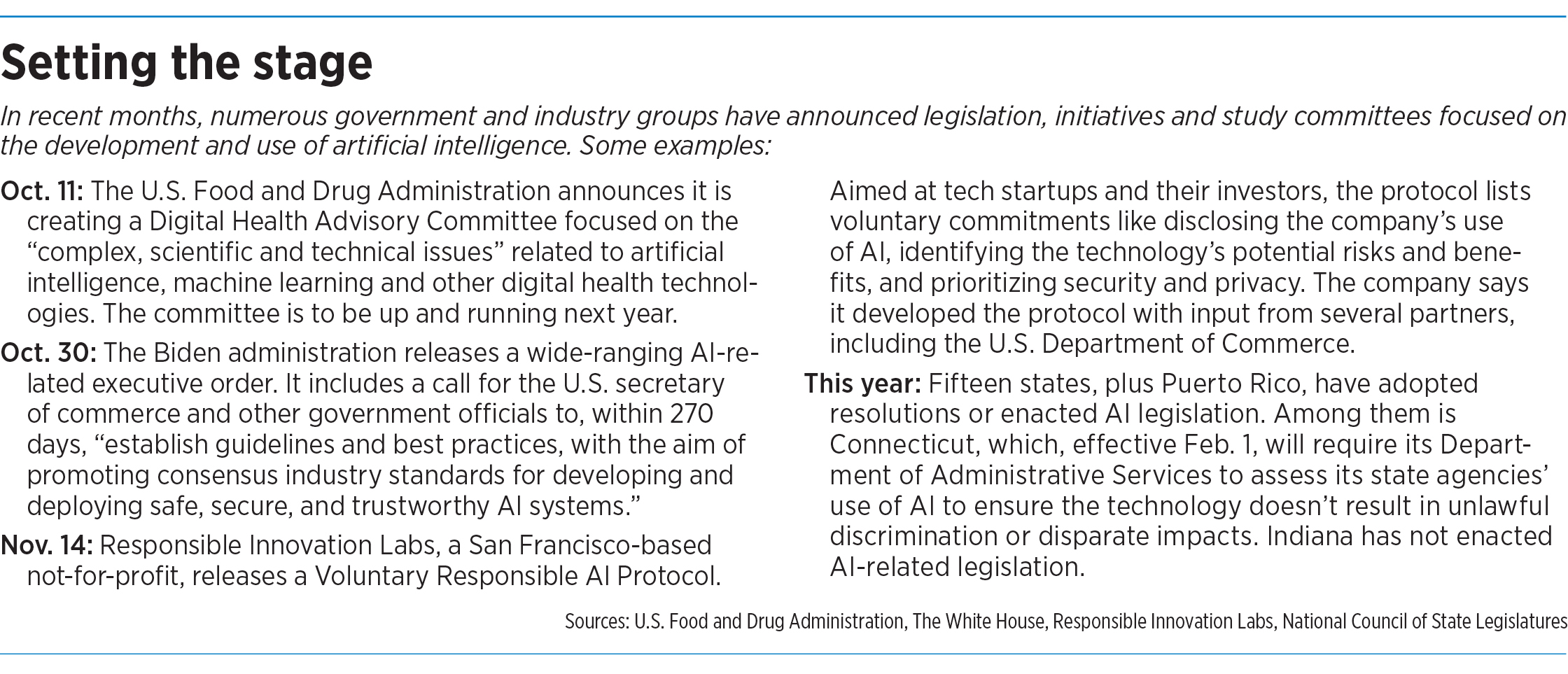

However, other entities have taken steps toward regulating AI.

“It’s happening slowly, but it’s definitely happening,” said Brad Bostic, founder and CEO of Indianapolis-based health software company Hc1 Insights. Bostic is also managing director at Indianapolis-based Health Cloud Capital, which invests in health-tech companies that use AI and machine learning to analyze data.

On Oct. 30, the Biden administration released an executive order on AI. The wide-ranging document included a call for the U.S. secretary of commerce and other government officials to, within 270 days, “establish guidelines and best practices, with the aim of promoting consensus industry standards for developing and deploying safe, secure, and trustworthy AI systems.”

Also in October, the U.S. Food and Drug Administration announced it would create a Digital Health Advisory Committee that focuses on the “complex, scientific and technical issues” related to AI and other technologies. The FDA says it intends to have the committee up and running next year.

In November, a San Francisco-based not-for-profit called Responsible Innovation Labs released what it calls a Voluntary Responsible AI Protocol. The document, aimed at tech startups and their investors, encourages the adoption of voluntary commitments around AI disclosures, risk/benefit analysis, security and other concerns. So far, the organization says, more than 50 tech investors and funds have adopted the commitments. (As of early this week, the list included no Indiana-based signatories.)

Also in November, the Federal Trade Commission announced it had approved a resolution that will make it easier for the agency to compel discovery in AI-related investigations.

Members of Congress have introduced numerous AI-related bills, including legislation introduced Nov. 15 called the Artificial Intelligence Research, Innovation and Accountability Act of 2023, sponsored by Sen. John Thune, R-South Dakota, and co-sponsored by a bipartisan group of five other senators.

And, according to the National Conference of State Legislatures, at least 25 states have introduced AI-related bills this year, while 15 states and Puerto Rico have adopted resolutions or enacted legislation around some aspect of the technology.

Bostic said the FDA has already granted approval for more than 100 medical devices that incorporate AI or machine learning in some way. The agency doesn’t yet have specific regulations focused on AI, he said, but added that the FDA and its European counterpart, the European Union Medical Device Regulation, are likely moving in that direction.

Specifically, Bostic said, he believes the FDA will develop rules around the AI and machine-learning models that are incorporated into medical devices. If a device uses AI to diagnose a patient, for instance, has the AI model been trained on 10 patients or 5,000?

“We have to ensure that the data sets used to train these models are providing this robust and more general clinical model that can be trusted beyond just a small group,” he said.

Regulation: pros and cons

In general, Bostic said, he favors a free-market approach to business, but “if there’s something that’s going to be used to drive a patient-care decision that has life-changing, [life]-altering consequences, that should be something the regulators are reviewing.”

Calvin Hendryx-Parker, co-founder and chief technology officer at Fishers-based software developer Six Feet Up Inc., said that he is bullish on AI’s ability to create jobs and opportunities, and that excessive regulation could stifle innovation and lead to a “neutered version” of the technology.

Yet Hendryx-Parker also said some guardrails around AI are necessary.

President Biden’s executive order directs federal agencies to, among other steps, address and prevent discrimination resulting from the use of AI in areas such as hiring, consumer finance and criminal justice.

Hendryx-Parker said he, too, worries about those possibilities. “We already have a problem with systemic racism in this world,” he said. “This [use of AI] could only pile on and exacerbate that problem.”

At the same time, he acknowledged, eliminating bias from the use of AI might be impossible if underlying societal biases aren’t addressed first.

Hendryx-Parker isn’t alone in being wary of AI regulation.

Christopher Day, CEO of Indianapolis-based Elevate Ventures, said the central feature of AI—that it can learn and evolve over time—makes trying to regulate the technology especially tricky.

“On a general level, it is a bad idea for legislators to think they can legislate AI,” Day said. “There are a lot of things you can control in life. [AI] is something you cannot control.”

Elevate Ventures, a not-for-profit, invests in Indiana startups and serves as the venture funding arm of the Indiana Economic Development Corp.

Day said regulating AI might further concentrate power in the hands of big tech companies. “It would be devastating to startups and early-stage and growth companies,” he said.

Transparency requirements that forced companies to disclose their use of AI could also be problematic, Day said. If a company uses AI in its products, for instance, mandated disclosure of that fact could have the unintended consequence of revealing a company’s trade secrets.

Too early? Too late?

Competitive concerns are also on the mind of Kristian Andersen, a partner at Indianapolis venture studio High Alpha.

Andersen describes himself as a person with “strong libertarian leanings” who also believes that “some regulation is absolutely required for a well-functioning economy and society.”

But Andersen said regulations work best when they are created in response to problems—not when they are crafted as protection from situations that might or might not come to pass.

“This is the nature of innovation,” he said. “You’ve got to let some of this happen and then respond to it.”

As AI becomes more accessible and more powerful—maybe in five to 10 years, Andersen said—that will be the time for regulation. But not yet.

He offered the FDA as an example. The agency’s modern roots trace to the passage of the Pure Food and Drugs Act of 1906. According to the FDA’s own website, that law was the result of a quarter-century’s worth of effort to “rein in long-standing, serious abuses in the consumer product marketplace.”

The FDA’s regulatory role is important, Andersen said, but it emerged over time. “Were people selling snake oil? Were people putting cocaine in children’s cough syrup? Yes. And so, thank goodness, the FDA ultimately emerged as a way to rationalize those markets and protect consumers.”

Trying to set too many regulations too early, Andersen said, can stifle competition and put America behind other countries that don’t have the same regulations.

Day wonders whether it’s already too late to try to regulate AI. “The cat’s already so far out of the bag,” he said.

One point the tech founders and funders can agree on is that, if legislation is to happen, it should be on the federal and/or international level. Having each state enact its own set of rules would be untenable, they said.

“This is certainly not a place for patchwork legislation,” Bostic said.

The technology association also favors a federal approach. At its annual legislative preview event last week, the organization unveiled its policy priorities for the General Assembly’s 2024 session. Among them: encouraging policymaker education on AI and advocating for what the group calls “balanced AI regulation” that considers safety and security needs without inhibiting innovation and technological advancement.

The association also prefers that any AI regulations happen on the global or federal level to provide more consistency and ease compliance burdens, Hallowell said.

Senate President Pro Tem Rod Bray, R-Martinsville, said he’s not sure whether any AI-focused bills will be introduced in the 2024 session but that he doesn’t see the topic as a top legislative priority.

“To do [AI] legislation on a state level is tricky,” Bray said. “We want to be cognizant of not limiting what AI does.”

